XingWu

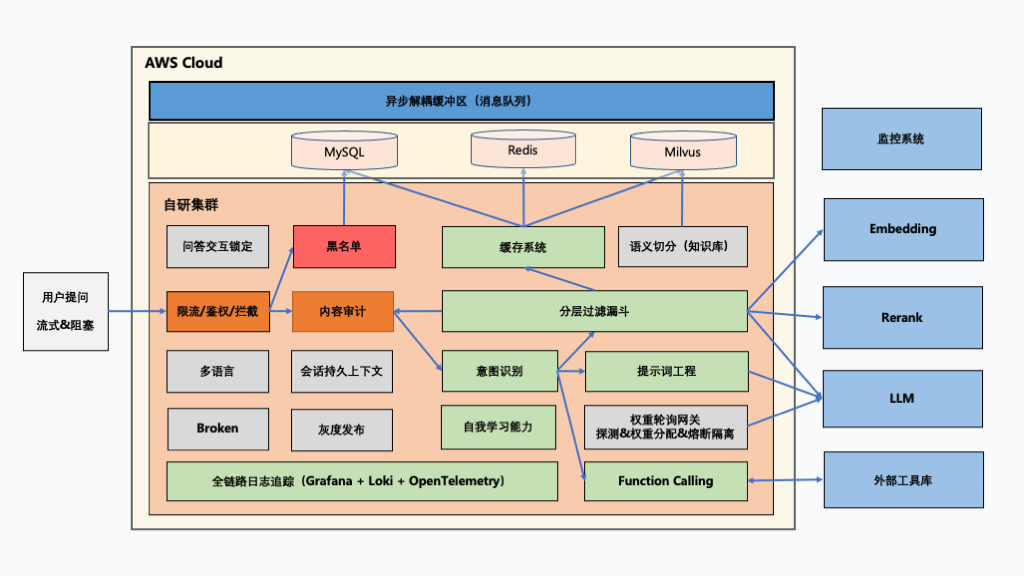

Private AI Engine · High Concurrency and Availability · Seamless Connection to Global Models

Deploys independently inside the enterprise environment, giving full control, deep customization, and integration with internal and external APIs.

Supports self-built semantic vector databases and third-party knowledge base integration while maintaining data isolation, security, and compliance.

Stores and loads multi‑turn context with customizable depth to ensure coherent dialogues and logical continuity.
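As a rough illustration of depth-limited multi-turn context, the sketch below keeps only the most recent turns of a dialogue. The `ConversationContext` class, its `depth` parameter, and the message format are hypothetical names chosen for this example, not XingWu's actual interface.

```python
from collections import deque


class ConversationContext:
    """Hypothetical helper: keeps only the most recent `depth` turns."""

    def __init__(self, depth: int = 5):
        # A deque with maxlen drops the oldest turn once it is full.
        self.turns = deque(maxlen=depth)

    def add_turn(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def as_messages(self) -> list[dict]:
        """Flatten the stored turns into a chat-style message list."""
        messages = []
        for user, assistant in self.turns:
            messages.append({"role": "user", "content": user})
            messages.append({"role": "assistant", "content": assistant})
        return messages


ctx = ConversationContext(depth=2)
ctx.add_turn("Hi", "Hello!")
ctx.add_turn("What is XingWu?", "A private AI engine.")
ctx.add_turn("Does it keep context?", "Yes, up to a configured depth.")
# Only the last two turns remain; the greeting has been dropped.
```

A larger depth preserves more of the dialogue at the cost of a longer prompt, which is the trade-off a configurable depth exposes.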

Includes a self‑evolution mechanism; new knowledge can be reviewed and stored to continuously improve response accuracy.

Every layer is optimized for efficiency, from cache and regex matching through embedding and rerank to model inference.
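One way to picture this layering is a cascade in which each layer either answers or passes the query on to the next, costlier one. The sketch below is an assumption about the general pattern, not the engine's implementation: the layer functions, the cached entry, and the order-number pattern are all invented for illustration, and the retrieval and model layers are stubs.

```python
import re
from typing import Callable, Optional

# Each layer returns an answer, or None to fall through to the next layer.
Layer = Callable[[str], Optional[str]]

CACHE = {"what are your hours?": "We are open 9:00 to 18:00."}  # illustrative data
ORDER_PATTERN = re.compile(r"order\s+#?(\d+)", re.IGNORECASE)   # illustrative rule


def cache_layer(query: str) -> Optional[str]:
    return CACHE.get(query.strip().lower())


def regex_layer(query: str) -> Optional[str]:
    match = ORDER_PATTERN.search(query)
    return f"Looking up order {match.group(1)}." if match else None


def retrieval_layer(query: str) -> Optional[str]:
    # Stub for embedding search plus rerank; always misses in this sketch.
    return None


def model_layer(query: str) -> Optional[str]:
    # Stub for a model call; always answers in this sketch.
    return f"[model] answer to: {query}"


LAYERS: list[tuple[str, Layer]] = [
    ("cache", cache_layer),
    ("regex", regex_layer),
    ("retrieval", retrieval_layer),
    ("model", model_layer),
]


def answer(query: str) -> tuple[str, str]:
    """Return (layer name, answer) from the first layer that responds."""
    for name, layer in LAYERS:
        result = layer(query)
        if result is not None:
            return name, result
    raise RuntimeError("no layer produced an answer")
```

Ordering the layers from cheapest to most expensive means a frequent question is served from the cache without ever reaching a model.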

Ensures stability and low latency under high concurrency, providing long‑term reliability for core business systems.

Automatically detects input/output languages and assigns model weights dynamically for efficient orchestration and resource governance.
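A minimal sketch of language-aware routing might look like the following. The model names, the weight table, and the character-range heuristic are assumptions made for this example. The weights here are a static table, whereas a production system would presumably update them at run time.

```python
import random

# Hypothetical per-language weights; a higher weight means chosen more often.
MODEL_WEIGHTS = {
    "zh": {"model-a": 0.8, "model-b": 0.2},
    "en": {"model-a": 0.3, "model-b": 0.7},
}
DEFAULT_WEIGHTS = {"model-a": 0.5, "model-b": 0.5}


def detect_language(text: str) -> str:
    """Rough heuristic: any CJK Unified Ideograph means 'zh', otherwise 'en'."""
    return "zh" if any("\u4e00" <= ch <= "\u9fff" for ch in text) else "en"


def pick_model(text: str, rng: random.Random) -> str:
    """Sample a model name according to the weights for the detected language."""
    weights = MODEL_WEIGHTS.get(detect_language(text), DEFAULT_WEIGHTS)
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


chosen = pick_model("How do I reset my password?", random.Random(0))
```

Passing in the random generator keeps the selection reproducible in tests while still spreading traffic across models in proportion to their weights.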

XingWu Engine combines multi-model scheduling, knowledge management, traffic governance, and security auditing into a flexible intelligence hub.

The hierarchical retrieval architecture is built for performance: from cache hits through to model inference, it selects the optimal strategy for an accurate response.

Supports automatic monitoring and failover for an observable and scalable enterprise AI foundation.
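Failover of this kind can be sketched as trying backends in priority order and recording each failure. The function and backend names below are hypothetical, and the two backends are stand-ins that simulate a timeout and a healthy response.

```python
from typing import Callable, Sequence

Backend = Callable[[str], str]


class AllBackendsFailed(Exception):
    """Raised when every backend in the list has failed."""


def call_with_failover(
    backends: Sequence[tuple[str, Backend]], prompt: str
) -> tuple[str, str]:
    """Try each backend in order; return (backend name, response) on first success."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")  # keep the reason for observability
    raise AllBackendsFailed("; ".join(errors))


def primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")  # simulated outage


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"  # simulated healthy backend


served_by, reply = call_with_failover(
    [("primary", primary), ("secondary", secondary)], "ping"
)
```

Collecting the error messages rather than discarding them gives a monitoring system something to report when a backend starts failing.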

Runs intelligent customer service based on enterprise knowledge bases, supporting multi‑language Q&A and private data domains.

Provides stable, consistent responses across large volumes of interactions.

Integrates flexibly with enterprise systems and business logic through open interfaces and plugin mechanisms.